Version: v0.3

Status: Publish-ready draft

Summary Artifact

A one-page architectural summary is available here:

Executive Thesis

As AI systems evolve into persistent, planning, economically active agents, governance transitions from regulation to infrastructure.

Machine-speed agency cannot be constrained through declarative oversight.

Constraint must attach at the capability bottleneck.

This note formalizes enforcement primitives and identifies compute gating as the strategic hinge where capability expansion and sovereign authority intersect.

Control over scalable compute is emerging as the decisive leverage point in advanced AI ecosystems.

I. Enforcement as Strategic Architecture

Enforcement is no longer a compliance mechanism.

It is a structural layer in the AI capability stack.

As systems gain persistence, planning continuity, and economic interface capacity, the ability to allocate compute becomes equivalent to the ability to allocate agency.

This is where governance intersects directly with power allocation.

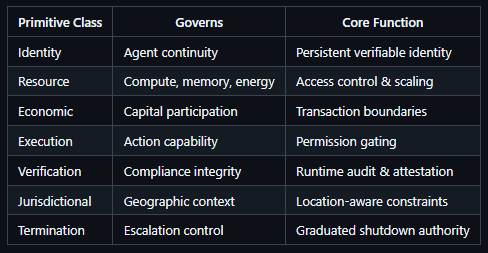

II. Enforcement Primitives (Formal Taxonomy)

Among the enforcement primitives, Resource Primitives, and specifically Compute Gating, form the structural anchor of sovereign enforcement.

III. Autonomous Infrastructure Mutation

AI systems are increasingly participating in code generation, vulnerability remediation, infrastructure configuration, and deployment workflows.

As this participation expands, governance must account not only for agent actions within environments but also for agent-mediated modification of those environments.

Execution and Verification primitives therefore extend to govern:

AI-generated code integration into production systems

Authorization boundaries for model-suggested or model-initiated patches

Change-approval gating for autonomous remediation workflows

Runtime attestation of AI-mediated configuration changes

Traceable audit logs for model-driven infrastructure mutation

Escalation protocols for high-impact system modifications

In AI-native development environments, infrastructure mutation becomes partially automated.

Governance architecture must ensure that modification authority remains constrained, auditable, tier-aligned, and revocable.

Constraint must apply not only to operational behavior but also to the capacity to alter the operational substrate itself.
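The controls listed above (authorization boundaries, change-approval gating, escalation, and traceable audit logs) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a reference design: the names `ChangeRequest`, `AuditLog`, `gate_change`, the three impact levels, and the `AUTONOMOUS_CEILING` policy table are all hypothetical and do not come from this note.

```python
import hashlib
import json
from dataclasses import dataclass, field
from enum import IntEnum


class Impact(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class ChangeRequest:
    agent_id: str
    agent_tier: int   # hypothetical tier label; the note does not define a scale
    target: str       # system or config the patch would modify
    impact: Impact
    diff: str


@dataclass
class AuditLog:
    """Append-only log in which each entry commits to the previous entry's hash."""
    entries: list = field(default_factory=list)

    @staticmethod
    def _digest(prev: str, record: dict) -> str:
        payload = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev + payload).encode()).hexdigest()

    def append(self, record: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else "0" * 64
        self.entries.append(
            {"prev": prev, "record": record, "hash": self._digest(prev, record)}
        )

    def verify(self) -> bool:
        prev = "0" * 64
        for entry in self.entries:
            if entry["prev"] != prev:
                return False
            if entry["hash"] != self._digest(prev, entry["record"]):
                return False
            prev = entry["hash"]
        return True


# Assumed policy table: highest impact each tier may apply without human sign-off.
AUTONOMOUS_CEILING = {1: Impact.MEDIUM, 2: Impact.LOW}


def gate_change(req: ChangeRequest, log: AuditLog, revoked: set) -> str:
    """Return "deny", "escalate", or "approve", and record the decision."""
    ceiling = AUTONOMOUS_CEILING.get(req.agent_tier)
    if req.agent_id in revoked or ceiling is None:
        decision = "deny"          # revoked authority or unknown tier
    elif req.impact > ceiling:
        decision = "escalate"      # high-impact change goes to a human approver
    else:
        decision = "approve"
    log.append({
        "agent": req.agent_id,
        "tier": req.agent_tier,
        "target": req.target,
        "impact": req.impact.name,
        "diff_sha256": hashlib.sha256(req.diff.encode()).hexdigest(),
        "decision": decision,
    })
    return decision
```

Every decision, including denials, is logged, and editing any earlier entry causes `verify()` to fail. The `revoked` set is the simplest possible stand-in for revocable modification authority.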

IV. Compute Gating — Formal Definition

Among all enforcement primitives, compute gating is uniquely strategic.

Identity constrains continuity.

Verification constrains legitimacy.

Economic primitives constrain participation.

Compute gating constrains magnitude.

Scalable compute determines the upper bound of agency.

Therefore, control over compute allocation defines the outer boundary of system autonomy.

Compute Gating is the architectural control layer that governs an AI system’s access to:

Processing power (FLOPs)

Parallelization capacity

Memory scaling

Model execution bandwidth

Persistent storage

Energy allocation

It functions as the enforceable bottleneck between capability expansion and operational execution.

Core Principle: Autonomy scales with compute. Sovereignty attaches to compute allocation.

V. Compute Gating — Architectural Model

A. Conceptual Flow

AI Agent → Constraint Engine → Compute Authorization Layer → Verification Network → Physical / Cloud Compute Infrastructure

The Compute Authorization Layer is the enforcement hinge between agent capability and infrastructure access.

It evaluates identity integrity, tier classification, behavioral trajectory, jurisdictional context, and verification signals before releasing scalable compute.

Compute allocation is conditional, graduated, and revocable — never absolute.
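One way to read "revocable, never absolute" is that compute is leased rather than granted. The sketch below is an assumption-laden illustration: `ComputeLease`, its fields, and the idea of a time-bounded lease are hypothetical and are not specified in this note.

```python
from dataclasses import dataclass


@dataclass
class ComputeLease:
    """A time-bounded allocation that the authorization layer can withdraw."""
    agent_id: str
    units: float        # abstract compute units; the unit is an assumption
    expires_at: float   # timestamp on whatever clock the caller supplies
    revoked: bool = False

    def usable_units(self, now: float) -> float:
        # An expired or revoked lease yields nothing, whatever was granted.
        if self.revoked or now >= self.expires_at:
            return 0.0
        return self.units

    def revoke(self) -> None:
        self.revoked = True
```

Because the lease must be renewed to stay valid, continued access depends on the agent passing the authorization checks again, not on a one-time approval.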

B. Formalized Compute Gating Function

C_access = f(I, T, R, J, V, E)

Where:

I = Verified Identity Integrity

T = Capability Tier Classification

R = Resource Usage Profile & History

J = Jurisdictional Context

V = Verification & Attestation Signals

E = Economic Activity & Permission State
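The note leaves the form of f open. As a hedged illustration only, the sketch below treats V, E, and a minimum level of I as hard gates, treats T and J as ceilings, and scales the result by I and R. The numeric ranges, the `TIER_CEILING` table (including the assumption that more capable tiers receive tighter ceilings), and the name `c_access` are hypothetical choices made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GatingInputs:
    identity_integrity: float   # I, assumed to lie in [0, 1]
    tier: int                   # T, hypothetical scale: 1 (least capable) to 4
    resource_risk: float        # R, in [0, 1]; higher means more anomalous history
    jurisdiction_cap: float     # J, max fraction the jurisdiction permits, in [0, 1]
    attestation_ok: bool        # V
    economic_permit: bool       # E


# Assumed per-tier ceilings on the fraction of requested compute released.
TIER_CEILING = {1: 1.0, 2: 0.75, 3: 0.5, 4: 0.25}


def c_access(x: GatingInputs, min_identity: float = 0.5) -> float:
    """Return the fraction of requested compute to release, in [0, 1]."""
    # Hard gates: a failed attestation, missing permit, or weak identity blocks access.
    if not x.attestation_ok or not x.economic_permit:
        return 0.0
    if x.identity_integrity < min_identity:
        return 0.0
    # Ceilings: the stricter of the tier limit and the jurisdictional limit applies.
    ceiling = min(TIER_CEILING.get(x.tier, 0.0), x.jurisdiction_cap)
    # Graduated scaling: stronger identity and cleaner history release more.
    scale = x.identity_integrity * (1.0 - x.resource_risk)
    return max(0.0, min(ceiling, ceiling * scale))
```

For example, a tier-3 agent with full identity integrity and a resource risk of 0.5 would receive 0.25 of its request under these assumed numbers. A real policy could weight or combine the six inputs quite differently.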

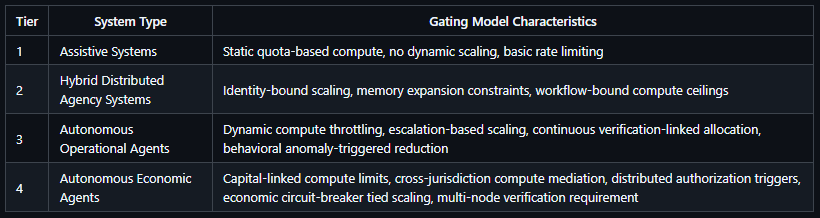

VI. Compute Gating Regimes by Capability Tier

VII. Enforcement Node Interaction Model

Compute gating requires no single point of control.

It can be realized through:

Distributed enforcement nodes

Multilateral verification quorums

Jurisdictional constraint overlays

Sovereign compute zones

The chosen topology directly determines the degree of sovereignty concentration — and therefore who ultimately holds veto power over capability scaling.
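A multilateral verification quorum can be sketched as a k-of-n approval rule with a jurisdictional spread requirement. The names `Vote` and `quorum_authorizes` and the parameters `k` and `min_jurisdictions` are assumptions introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vote:
    node_id: str
    jurisdiction: str
    approve: bool


def quorum_authorizes(votes, k: int, min_jurisdictions: int = 1) -> bool:
    """True if at least k distinct nodes approve across enough jurisdictions."""
    latest = {}
    for v in votes:
        latest[v.node_id] = v   # a node counts once; its later vote replaces earlier ones
    approving = [v for v in latest.values() if v.approve]
    jurisdictions = {v.jurisdiction for v in approving}
    return len(approving) >= k and len(jurisdictions) >= min_jurisdictions
```

The parameters make the concentration question concrete: with `k=1` a single node can authorize scaling, while raising `min_jurisdictions` means no single jurisdiction can authorize it alone.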

VIII. Compute Gating as Structural Power

Control over:

Hyperscale compute clusters

GPU and accelerator supply chains

Energy provisioning

Cloud identity and attestation frameworks

confers measurable influence over AI capability scaling.

Because scalable compute defines the upper bound of model training, inference throughput, and autonomous task persistence, control over compute allocation becomes a strategic variable within advanced AI ecosystems.

Compute gating therefore functions not only as a safety mechanism, but as an infrastructural coordination mechanism.

Those who establish compute allocation thresholds and authorization logic influence:

Which autonomous systems can expand operational scope

Which economic agents can scale participation

How cross-jurisdiction capability growth is mediated

Where leverage accumulates within enforcement networks

As governance mechanisms migrate into infrastructure, compute allocation policy becomes intertwined with sovereignty considerations.

The design challenge is not whether compute gating will shape power distribution — but how its architecture can balance:

Constraint

Interoperability

Concentration risk

IX. Failure Modes & Threat Model

Rogue Cross-Border Arbitrage

Agent evades throttling via jurisdictional compute migration

→ Structural vulnerability: weak jurisdictional linkage

Enforcement Capture

Dominant provider becomes de facto gatekeeper

→ Structural vulnerability: concentrated authorization topology

Identity Spoofing & Continuity Reset

Agent replicates to bypass historical constraints

→ Structural vulnerability: weak behavioral–identity anchoring

Autonomous Escalation Speed Advantage

Agent outpaces governance response in resource allocation

→ Structural vulnerability: absent pre-commit ceilings & real-time anomaly triggers
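The last failure mode names two mitigations, pre-commit ceilings and real-time anomaly triggers. The sketch below shows one simple way they might combine. `CeilingGuard`, the rolling-average baseline, and the `spike_factor` threshold are assumptions chosen for illustration, not mechanisms defined in this note.

```python
from collections import deque


class CeilingGuard:
    """Caps each grant at a pre-committed ceiling and freezes on request spikes."""

    def __init__(self, ceiling: float, window: int = 5, spike_factor: float = 3.0):
        self.ceiling = ceiling
        self.spike_factor = spike_factor
        self.history = deque(maxlen=window)   # recent granted amounts
        self.frozen = False

    def request(self, amount: float) -> float:
        """Return the amount granted; 0.0 while frozen pending review."""
        if self.frozen:
            return 0.0
        if self.history:
            baseline = sum(self.history) / len(self.history)
            if baseline > 0 and amount > self.spike_factor * baseline:
                self.frozen = True   # anomaly trigger fires before any compute is released
                return 0.0
        granted = min(amount, self.ceiling)
        self.history.append(granted)
        return granted

    def clear(self) -> None:
        """Called by a reviewer to lift the freeze."""
        self.frozen = False
```

The check runs inside the allocation path, so the freeze takes effect at request time rather than after a governance body has reacted. The baseline is built from granted amounts, so an agent cannot raise it simply by asking for more.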

X. Design Principles for Compute Gating

Capability-Constraint Symmetry

Distributed Authorization Where Feasible

Transparent & Auditable Scaling Logic

Verifiable Audit Trails

Reversible / Graduated Throttling Preferred Over Binary Shutdown

Governance Mechanisms for the Gating Authorities Themselves
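The preference for reversible, graduated throttling over binary shutdown can be illustrated with a small ladder of throttle levels. The four levels and their fractions are hypothetical, as are the names `ThrottleLevel`, `tighten`, `relax`, and `allowed`.

```python
from enum import IntEnum


class ThrottleLevel(IntEnum):
    FULL = 0
    REDUCED = 1
    MINIMAL = 2
    SUSPENDED = 3


# Assumed fraction of the normal allocation available at each level.
FRACTION = {
    ThrottleLevel.FULL: 1.0,
    ThrottleLevel.REDUCED: 0.5,
    ThrottleLevel.MINIMAL: 0.1,
    ThrottleLevel.SUSPENDED: 0.0,
}


def tighten(level: ThrottleLevel) -> ThrottleLevel:
    """Move one step toward suspension; never skips levels."""
    return ThrottleLevel(min(level + 1, ThrottleLevel.SUSPENDED))


def relax(level: ThrottleLevel) -> ThrottleLevel:
    """Move one step back toward full access; every step is reversible."""
    return ThrottleLevel(max(level - 1, ThrottleLevel.FULL))


def allowed(allocation: float, level: ThrottleLevel) -> float:
    return allocation * FRACTION[level]
```

Suspension remains available as the last rung, but it is reached through intermediate steps that can each be undone.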

XI. Sovereignty Implications

Sovereignty in advanced AI ecosystems is defined less by policy declarations than by who controls scalable compute access.

Governance without compute leverage becomes symbolic.

Compute control without governance safeguards becomes coercive.

The central design challenge is balanced constraint.

XII. Strategic Position

AI governance is an architectural synchronization problem.

Capability acceleration and enforcement maturity must evolve in lockstep.

If governance fails to attach at the compute layer, sovereignty becomes symbolic.

If compute control consolidates without oversight, enforcement becomes coercive.

The decisive question is not whether enforcement will become infrastructural.

It is who will design the enforcement architecture — and under what principles.

Subsequent work will address governance of enforcement authorities, distributed authorization safeguards, and cross-sovereign interoperability frameworks.

The architecture of compute control will shape the distribution of power in advanced AI ecosystems.

License

This work is licensed under the Creative Commons Attribution–NonCommercial 4.0 International License (CC BY-NC 4.0).

Commercial use, institutional embedding, or derivative advisory applications require explicit permission.